DDNA is designed around generic detection of subversive code. To do this, HBGary disassembles everything on-the-fly and pushes it through a sieve of regular expressions that match against control flow and data flow features. I thought it would be fun to delve into some specific examples.

As Martin recently pointed out in his blogpost, APT has started to use in-memory injections as a means to hide code. We have noticed remote-access functions injected and split over a range of memory allocations.

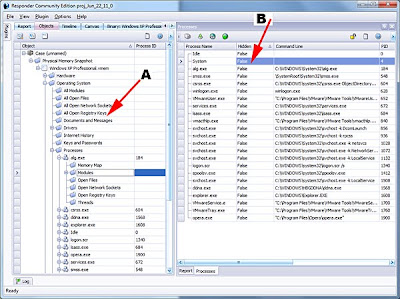

In the screenshot, you can see a dozen 4K (0x1000) allocations injected into explorer.exe. (Note: this type of activity can be detected using the free Responder CE.) Each page of memory only contains a tiny portion of the overall malware – something that would frustrate most AV scanners. However, the allocations themselves are suspicious to Digital DNA™, and in particular the last page has a suspicious code fragment that scores quite heavily in Digital DNA™. This illustrates why a filesystem-only view is not sufficient to detect APT tools. Many advanced techniques involve modifications to the running system and can only be detected in memory.

In this example, the hacker hasn’t hooked anything. Instead, he starts some additional threads to service the malware code. Even though the malware has been split over a dozen pages, the hacker has only started two threads. In this example, allocations #8 and #11 each host a thread subroutine. The other memory pages each hold specific subroutines. For example, one of the memory pages has a function for installation into the registry, while another has a function for hiding a copy of the malware in an alternate data stream. It’s these suspicious behaviors that Digital DNA™ is focused on detecting. Furthermore, it’s the behaviors being used together that will really light up color-coded DDNA alerts.

One suspicious feature is when code exists outside the bounds of a known module. This will occur if the hacker allocated additional space for storing an injected routine. This is commonly done using VirtualAllocEx(), but can also be achieved using the stack of an injected thread. In the latter case, CreateRemoteThread() is used with a stack size argument large enough to store an injected routine. In either case, executable code is detected outside of a defined module, and this will score as suspicious by default even without further analysis.

Moving further, however, injected code is typically handwritten assembly. In most cases, the operational code will not resemble known compiler patterns (such as code compiled by Visual C++ or Borland). In particular, the code may contain position-independent operations – function calls and data references that are designed to work independent of the address where the code lives in memory. These are further indicators of suspicion. In my experience, the only time this kind of code appears in a legitimate binary is when DRM is being used (DRM looks and smells like malware anyway).
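The "code outside a known module" check can be illustrated with a small sketch. This is not how Digital DNA™ implements it. The `Region` type, the `(base, size)` module tuples, and the function names are hypothetical, and the sketch assumes you already have a list of committed memory regions and loaded module ranges from a memory snapshot.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Region:
    """A committed memory region (hypothetical snapshot record)."""
    base: int
    size: int
    executable: bool

    @property
    def end(self) -> int:
        return self.base + self.size


def inside_any_module(region: Region, modules: List[Tuple[int, int]]) -> bool:
    """True if the region lies entirely within one loaded module's range."""
    return any(
        mod_base <= region.base and region.end <= mod_base + mod_size
        for mod_base, mod_size in modules
    )


def find_unbacked_code(regions: List[Region],
                       modules: List[Tuple[int, int]]) -> List[Region]:
    """Return executable regions that no loaded module accounts for."""
    return [r for r in regions
            if r.executable and not inside_any_module(r, modules)]
```

With one module at `0x400000` of size `0x20000`, an executable 4K region at `0x7F0000` would be returned as a candidate, while an executable region at `0x401000` would not. A real scanner would treat this only as a starting score, since legitimate JIT compilers also produce executable memory outside modules.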

To look back at our example, it had some interesting techniques for embedding data inline with code:

In the example, you see the “w32_32” string in use, but what makes this interesting is how the string is embedded inline to the code. Right before the string we see a short call that jumps over the string, and code execution continues on the other side. Again, this idiom is suspicious and can be detected generically, as opposed to reliance on a specific string or byte pattern.
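As a rough illustration of why this idiom can be matched generically, here is a byte-level sketch. It assumes 32-bit x86, where opcode `0xE8` is a near CALL with a 4-byte relative displacement; because the CALL pushes the address of the next byte, the code on the far side receives a pointer to the string. The function name and length thresholds are hypothetical, and a real engine would work on disassembled control flow rather than raw bytes.

```python
import struct
from typing import List, Tuple


def find_call_over_string(code: bytes,
                          min_len: int = 4,
                          max_len: int = 64) -> List[Tuple[int, str]]:
    """Find near CALLs (0xE8 rel32) that jump over an inline ASCII string.

    Returns (offset_of_call, string) pairs. The bytes skipped by the call
    must be printable ASCII followed by a single NUL terminator.
    """
    hits = []
    for off in range(len(code)):
        if code[off] != 0xE8 or off + 5 > len(code):
            continue
        # Displacement is relative to the end of the 5-byte CALL instruction.
        (rel,) = struct.unpack_from("<i", code, off + 1)
        str_len = rel - 1  # bytes skipped, minus the NUL terminator
        if not (min_len <= str_len <= max_len):
            continue
        blob = code[off + 5: off + 5 + rel]
        if len(blob) != rel or blob[-1] != 0:
            continue
        text = blob[:-1]
        if all(0x20 <= b < 0x7F for b in text):
            hits.append((off, text.decode("ascii")))
    return hits
```

Note that nothing in the check depends on the content of the string. Any printable string hidden behind a short forward call is reported, which is what makes the match generic rather than signature-based.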

In the case of Digital DNA™, code 16 30 detects short calls and jumps over inlined networking related strings. How did we get here? HBGary detected that some APT groups were producing this code pattern as a result of some code-level anti-forensics tools. This is exactly the kind of pattern that produces big wins on the detection side as the code is often cut-and-paste or the obfuscation is applied in batch to otherwise custom-compiled malware. (Of course, now that I’ve blogged about it they will switch off to another trick – it’s OK, we have thousands of traits to detect suspicious behaviors).

Another example of handwritten code is the CRC function used by the hacker to load his table of function pointers. This CRC-based technique has been around in shellcode for a long, long time (digression: I think I released the first public CRC loader in shellcode in the early 2000’s – it was 32-bit CRC. Thinking back, Halvar Flake publicly released a better and smaller 16-bit CRC loader in shellcode shortly afterward. The technique has been written about many times since).

The routine that actually calculates the CRC is usually hand-made – so it too can become a form of attribution. But even if it’s not hand-made, the proximity of CRC to a GetProcAddress() call would be indicative of this pattern. In our APT example, the author has created a CRC for loading a function table:

The CRC calculation is referenced from a routine that is rolling through KERNEL32.DLL and calling GetProcAddress(). This pattern screams for attention “Hey! I’m malicious!”
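For readers who have not seen a CRC-based loader, the idea can be modeled in a few lines. This is a sketch under stated assumptions, not the sample's code: the `exports` dictionary is a hypothetical stand-in for a DLL's export table, and the standard CRC-32 from Python's `zlib` replaces whatever handwritten CRC variant the malware author used.

```python
import zlib
from typing import Dict, Iterable


def name_hash(name: str) -> int:
    """Standard CRC-32 of an export name (stand-in for a custom CRC)."""
    return zlib.crc32(name.encode("ascii")) & 0xFFFFFFFF


def resolve_by_hash(exports: Dict[str, int],
                    wanted: Iterable[int]) -> Dict[int, int]:
    """Build a hash -> address table by walking every export name.

    The loader stores only the hashes it wants, never the API names,
    so the names do not appear as strings in the injected code.
    """
    wanted_set = set(wanted)
    table = {}
    for name, address in exports.items():
        h = name_hash(name)
        if h in wanted_set:
            table[h] = address
    return table
```

The payoff for the attacker is that string scans for API names come up empty. The cost is the structure itself: a checksum loop feeding a name-resolution loop is a recognizable control-flow shape regardless of which CRC variant or which APIs are involved.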

So again, Digital DNA™ for the win. The CRC can be detected using a generic method, and when detected in control flow in proximity to a GetProcAddress() loop, it scores hot with trait C3 F7.

These are just some examples of how Digital DNA™ focuses on analyzing the code itself, as opposed to blacklisted MD5’s or ASCII strings. It is not possible to specify these behavioral patterns with simple languages like OpenIOC or even ADXML (Active Defense’s XML for scan policies) – they can only be detected programmatically. That is why our product Active Defense doesn’t depend on IOC’s alone to do the job – in fact, Active Defense starts with full physical memory analysis and Digital DNA™ sequencing. IOC’s come second and only if the user wants to extend the default detection capability with custom threat intelligence. The two methods work well together: Digital DNA™ to detect new and unknown threats, and IOC’s as a follow-up sweep for known APT behaviors.

Using IOC’s effectively

One of the reasons we invented Digital DNA™ is that IOC’s alone aren’t good enough. A problem arises when IOC’s are only used to detect known threats. Think about this – if your IOC’s are just a blacklist of recently discovered malware MD5’s and unique strings, then it’s equivalent to a small AV dat file. Even though IOC’s can be used to detect TTP’s (e.g., scanning the enterprise for split RAR archives or recent use of ‘net.exe’), we generally see them employed to detect specific malware files. If your organization has a database of IOC’s, then look for yourself. How many entries have MD5 checksums? How many are specific to a malware sample, a specific registry key used to survive reboot, etc.? If you see an overabundance of these signatures then beware – this is the same old blacklist-driven security model that has been failing us for over 10 years now. On the other hand, if you are using IOC’s to scan for more generalized things, such as command-line usage, access times on common utilities, executables in the recycle bin, etc., then you are on a far better trajectory. I support open intelligence sharing, but I caution you against falling into the “magical strings” bucket. Too often our industry shares threat intelligence in the form of blacklisted MD5’s or IP addresses – this kind of threat intelligence is nearly useless.
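The "look for yourself" audit is easy to approximate. The sketch below assumes your indicators can be exported as a flat list of strings, which is a simplification since real IOC formats are structured. The function names are hypothetical. It only measures what share of entries are bare MD5 hashes or IP addresses.

```python
import ipaddress
import re
from typing import List

# Exactly 32 hex digits, the textual shape of an MD5 checksum.
MD5_RE = re.compile(r"[0-9a-fA-F]{32}")


def classify_indicator(value: str) -> str:
    """Label an indicator as 'md5', 'ip', or 'other'."""
    value = value.strip()
    if MD5_RE.fullmatch(value):
        return "md5"
    try:
        ipaddress.ip_address(value)
        return "ip"
    except ValueError:
        return "other"


def blacklist_ratio(indicators: List[str]) -> float:
    """Fraction of indicators that are plain hash or IP blacklist entries."""
    if not indicators:
        return 0.0
    kinds = [classify_indicator(v) for v in indicators]
    return sum(kind != "other" for kind in kinds) / len(kinds)
```

A ratio close to 1.0 suggests the library is mostly a blacklist. The number says nothing about the quality of the remaining entries, so it is a prompt for review rather than a verdict.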

HBGary’s managed services team generates many IOC’s in the course of their work, and I am happy to say that we share all of them with our Active Defense customers – we don’t keep them secret. They are provided automatically in the form of a library that is auto-updated. Customers can pick and choose from many search definitions and use these as a basis to create their own custom searches. Our team tries to steer away from malware-specific indicators, and instead focuses on the generic attack patterns that can be detected at the host. We give these to our customers because we want them to get the most from our software. We enable people to be self-reliant.

When you use Digital DNA™ and IOC’s together, you aren’t relying on a “magical bag of strings” that goes stale every two months. Instead, you are detecting new threats and then using IOC’s to apply attrition against the attacker’s persistence. This is a strong defensive position. This is why our proven behavior-based approach is increasingly winning us new customers – even unseating our competition in many accounts.

-Greg